People undertaking a project delivery role require at least an awareness of verification and validation and, in particular, of its implications for their own role.

For a programme or project, the senior responsible owner is accountable for ensuring a verification and validation management framework is in place and that it is effective.

The programme or project manager, as appropriate, is responsible for ensuring the verification and validation strategies and methods are being managed and used.

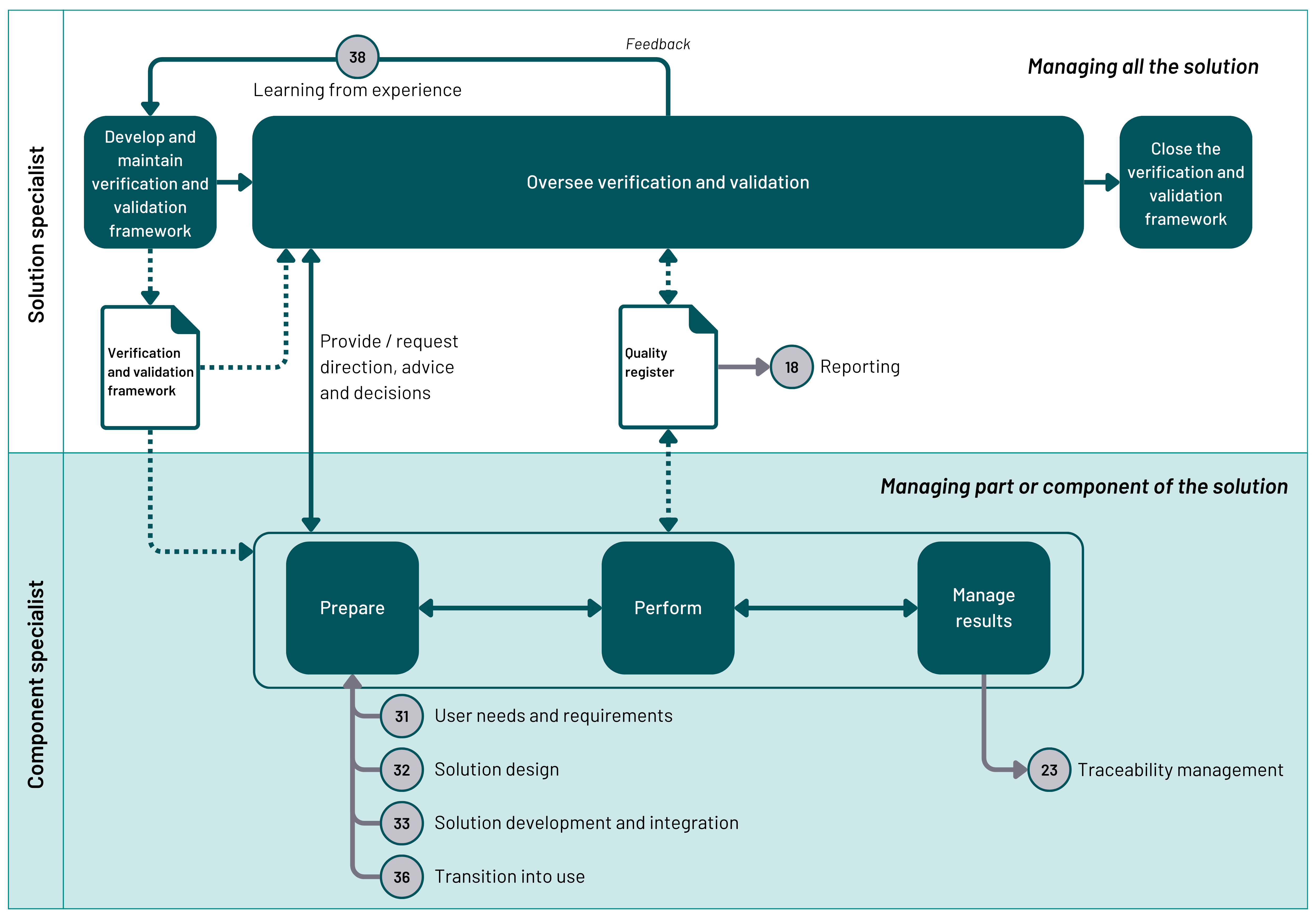

The verification and validation strategies and methods should be defined and owned by a solution specialist working with a designated component specialist for each output or group of closely related outputs. The responsibilities normally follow the solution hierarchy (sometimes called a system hierarchy or product breakdown structure). The role titles for people who manage verification and validation differ widely, depending on the type of output and the methodology used.

To fulfil their responsibilities, the programme or project manager needs access to verification and validation records and needs to understand the implications the results have for the completion of the work and the likelihood of achieving the objectives. The senior responsible owner is accountable for the programme or project achieving its objectives at an acceptable level of risk and therefore needs to be kept updated on issues that could threaten the achievement of those objectives.